Fee For Service versus Fee For Value Healthcare

I found the following definitions in the article “What Kaiser’s Acquisition of Geisinger Means For Us All,” Forbes, Robert Pearl, M.D., May 31, 2023.

There are a couple of terms within the article which I would like to point out: Fee For Service and Fee For Value. For clarity, Traditional Medicare uses the Fee For Service methodology and Medicare Advantage uses the Fee For Value methodology.

The following paragraphs were pulled from the Forbes article, Question 3, “Will The Deal Work.” You are going to hear a lot about each manner of payment, FFS and Capitation (Fee For Value), and why each side delivering healthcare thinks its methodology is better. Anything outside of Single Payer is commercial healthcare. Medicare sets fees and has its issues.

~~~~~~~~

Article: Most healthcare observers understand the inherent flaw in the “fee for service” (FFS) model is also its greatest appeal to providers: the more you do the more you earn. FFS is how nearly all financial transactions take place in America (i.e., provide a service, earn a fee). In medicine, however, this financial model results in frequent over-testing and over-treatment with minimal if any improvement in clinical outcomes, according to researchers.

AB: If you click on the link above, you end up at “Moving the Health Care System Away from Fee-for-Service,” a short paragraph. There is not much there to discuss, so I moved on to the links. If you click on the link “alternative payment,” you end up at an article, “Wielding the Carrot and the Stick: How to Move the U.S. Health Care System Away from Fee-for-Service Payment,” Commonwealth Fund, Stuart Guterman, August 27, 2013. The first link in Guterman’s paper, “fail to provide incentives,” does not link to anything. The second link, “broad range,” takes you to “100% of Americans agree: this page does not exist, but it might if we work together.” The third link takes you to a lengthy paper, “Bending the Curve: Person-Centered Health Care Reform – A Framework for Improving Care and Slowing Health Care Cost Growth,” Brookings, April 29, 2013, with no author that I can find. One out of the three links works, and the articles linked to are a decade old. Much has transpired since 2013.

Article: The “value-based” alternative to FFS involves prepaying for care. A model often referred to as “capitation.” In short, capitation involves a single fee, paid upfront for all the medical care provided to a defined population of patients for one year based on their age and health status. The better an organization at preventing disease and avoiding complications from chronic illness, the greater its success in both clinical quality and affordability.
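To make the difference in incentives concrete, here is a minimal sketch in Python. Every number in it is made up for illustration and none comes from the Forbes article or from CMS. The function names `ffs_margin` and `capitation_margin` are hypothetical. The only point is the direction of the incentive: under FFS each added service adds revenue, while under capitation revenue is fixed upfront and each added service is a cost.

```python
# Illustrative only: all fees, costs, and counts are hypothetical assumptions.

def ffs_margin(visits: int, fee_per_visit: float, cost_per_visit: float) -> float:
    """Fee for service: the provider is paid for every service delivered."""
    return visits * (fee_per_visit - cost_per_visit)


def capitation_margin(members: int, annual_fee_per_member: float,
                      visits: int, cost_per_visit: float) -> float:
    """Capitation: one upfront fee per member per year; services are pure cost."""
    return members * annual_fee_per_member - visits * cost_per_visit


if __name__ == "__main__":
    # Same hypothetical population of 1,000 patients at three levels of utilization.
    for visits in (3000, 4000, 5000):
        print(visits,
              ffs_margin(visits, fee_per_visit=150.0, cost_per_visit=100.0),
              capitation_margin(1000, 500.0, visits, cost_per_visit=100.0))
```

With these assumed numbers, the FFS margin rises as visits rise and the capitated margin falls, which is the "more you do the more you earn" point made in the article.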

AB: The value-based plan in Medicare is called Medicare Advantage. Medicare Advantage evaluates each patient on a yearly basis (year 2022 valuation for year 2023) and submits the codes determined by the doctors within the Medicare Advantage plans to CMS MedPac. What has been happening over the years is that the Medicare Advantage commercial insurance plans have been over coding their patients. Medicare Advantage receives the payments and pays the doctors within its plans the same as commercial insurance is paid by Medicare.

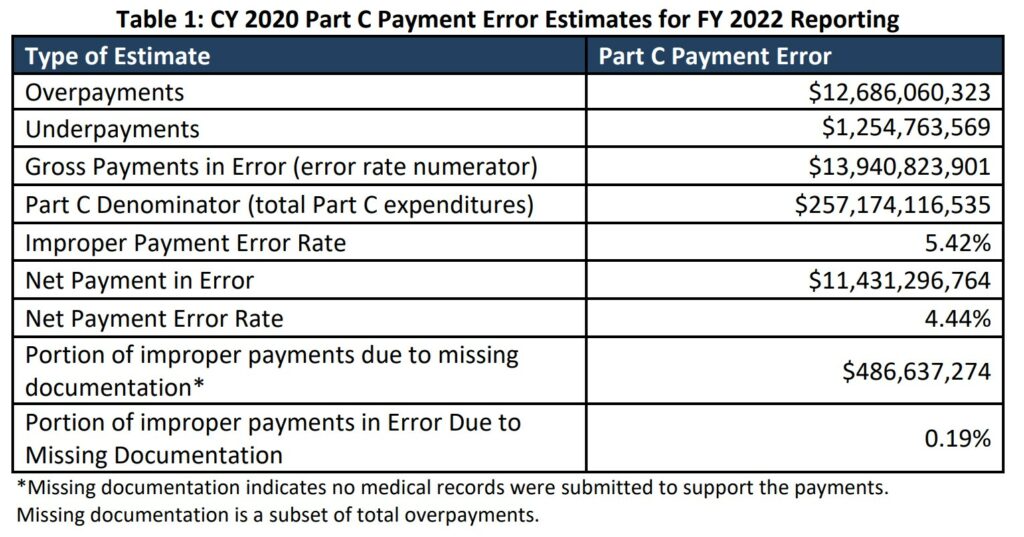

Within Medicare, the cost of care is far less than the cost of commercial healthcare. The coding by Medicare Advantage Plans has led to capitation over coding. For year 2020, commercial insurance over coding results to Medicare.

Article: Within the small world of capitated healthcare payments, there’s an important element that often gets overlooked. It makes a big difference who receives that lump-sum payment.

In the case of Kaiser Permanente, capitated payments are made directly to the medical group and the physicians who are responsible for providing care. In almost every other health system, an insurance company collects capitated payments but then pays the medical providers on a fee-for-service basis. Even though the arrangement is referred to as capitated, the incentives are overwhelmingly tied to the volume of care (not the value of that care).

In a mixed-payment model, doctors and hospitals invariably prioritize the higher paying FFS patients over the capitated ones. When I think about these conflicting incentives, I’m reminded of a prominent medical group in California. It had a main entrance for its fee-for-service patients and a second, smaller one off to the side for capitated patients.

AB: In 2022, Kaiser suffered a $4.47 Billion Net Loss. Rising Costs are Blamed for Kaiser Permanente’s Net Loss. Now, I have talked about Medicare FFS and Medicare Advantage Fee For Value costing. Most doctors would rather have patients with Medicare Fee For Service healthcare insurance because there is less chance of the rejection game being played. And it does happen quite frequently with Commercial Healthcare Insurance, which Medicare Advantage also is. The only difference here is the discovered over coding by Advantage plans, resulting in overcharges of ~$12 billion in 2020. Furthermore, Medicare Advantage plan over coding is bleeding the Medicare trust fund rapidly.

A thought? Let’s go to Single Payer healthcare and eliminate healthcare insurance. Single Payer will set rates and hospital budgets, etc.

Article: I doubt the time spent with the patient—or the overall care provided—was equal for both groups. When income is based on quantity of care, not quality, clinicians focus more on treating the complications of chronic disease and medical errors rather than preventing them in the first place. Geisinger has walked this tightrope in the past, but as economic pressures mount, I fear doctors will find the two sets of incentives conflicting and difficult to navigate.

AB: Let’s code properly and not deny, deny coverage, which is apparent in commercial healthcare insurance.

“Bending the Curve: Person-Centered Health Care Reform – A Framework for Improving Care and Slowing Health Care Cost Growth,” Brookings, No author, April 29, 2013

“Rising Costs Blamed For Kaiser Permanente’s $4.47 Billion Net Loss,” KFF Health News

(If there were a recent ‘Open Thread’ this would be posted there.)

A.I. Poses ‘Risk of Extinction,’ Industry Leaders Warn

NY Times – May 30

Leaders from OpenAI, Google DeepMind, Anthropic and other A.I. labs warn that future systems could be as deadly as pandemics and nuclear weapons.

A group of industry leaders warned on Tuesday that the artificial intelligence technology they were building might one day pose an existential threat to humanity and should be considered a societal risk on a par with pandemics and nuclear wars.

“Mitigating the risk of extinction from A.I. should be a global priority alongside other societal-scale risks, such as pandemics and nuclear war,” reads a one-sentence statement released by the Center for AI Safety, a nonprofit organization. The open letter was signed by more than 350 executives, researchers and engineers working in A.I.

The signatories included top executives from three of the leading A.I. companies: Sam Altman, chief executive of OpenAI; Demis Hassabis, chief executive of Google DeepMind; and Dario Amodei, chief executive of Anthropic.

Geoffrey Hinton and Yoshua Bengio, two of the three researchers who won a Turing Award for their pioneering work on neural networks and are often considered “godfathers” of the modern A.I. movement, signed the statement, as did other prominent researchers in the field. (The third Turing Award winner, Yann LeCun, who leads Meta’s A.I. research efforts, had not signed as of Tuesday.)

The statement comes at a time of growing concern about the potential harms of artificial intelligence. Recent advancements in so-called large language models — the type of A.I. system used by ChatGPT and other chatbots — have raised fears that A.I. could soon be used at scale to spread misinformation and propaganda, or that it could eliminate millions of white-collar jobs. …

Eventually, some believe, A.I. could become powerful enough that it could create societal-scale disruptions within a few years if nothing is done to slow it down, though researchers sometimes stop short of explaining how that would happen.

These fears are shared by numerous industry leaders, putting them in the unusual position of arguing that a technology they are building — and, in many cases, are furiously racing to build faster than their competitors — poses grave risks and should be regulated more tightly.

This month, Mr. Altman, Mr. Hassabis and Mr. Amodei met with President Biden and Vice President Kamala Harris to talk about A.I. regulation. In a Senate testimony after the meeting, Mr. Altman warned that the risks of advanced A.I. systems were serious enough to warrant government intervention and called for regulation of A.I. for its potential harms.

Dan Hendrycks, the executive director of the Center for AI Safety, said in an interview that the open letter represented a “coming-out” for some industry leaders who had expressed concerns — but only in private — about the risks of the technology they were developing.

“There’s a very common misconception, even in the A.I. community, that there only are a handful of doomers,” Mr. Hendrycks said. “But, in fact, many people privately would express concerns about these things.”

Some skeptics argue that A.I. technology is still too immature to pose an existential threat. When it comes to today’s A.I. systems, they worry more about short-term problems, such as biased and incorrect responses, than longer-term dangers.

But others have argued that A.I. is improving so rapidly that it has already surpassed human-level performance in some areas, and that it will soon surpass it in others. They say the technology has shown signs of advanced abilities and understanding, giving rise to fears that “artificial general intelligence,” or A.G.I., a type of artificial intelligence that can match or exceed human-level performance at a wide variety of tasks, may not be far off. …

In a blog post last week, Mr. Altman and two other OpenAI executives proposed several ways that powerful A.I. systems could be responsibly managed. They called for cooperation among the leading A.I. makers, more technical research into large language models and the formation of an international A.I. safety organization, similar to the International Atomic Energy Agency, which seeks to control the use of nuclear weapons.

In March, more than 1,000 technologists and researchers signed another open letter calling for a six-month pause on the development of the largest A.I. models, citing concerns about “an out-of-control race to develop and deploy ever more powerful digital minds.”

The brevity of the new statement from the Center for AI Safety — just 22 words in all — was meant to unite A.I. experts who might disagree about the nature of specific risks or steps to prevent those risks from occurring, but who share general concerns about powerful A.I. systems, Mr. Hendrycks said.

“We didn’t want to push for a very large menu of 30 potential interventions,” he said. “When that happens, it dilutes the message.”

The statement was initially shared with a few high-profile A.I. experts, including Mr. Hinton, who quit his job at Google this month so that he could speak more freely, he said, about the potential harms of artificial intelligence. From there, it made its way to several of the major A.I. labs, where some employees then signed on. …

ChatGPT took their jobs. Now they walk dogs and fix air conditioners.

Washington Post via Boston Globe – June 3

When ChatGPT came out last November, Olivia Lipkin, a 25-year-old copywriter in San Francisco, didn’t think too much about it. Then, articles about how to use the chatbot on the job began appearing on internal Slack groups at the tech start-up where she worked as the company’s only writer.

Over the next few months, Lipkin’s assignments dwindled. Managers began referring to her as “Olivia/ChatGPT” on Slack. In April, she was let go without explanation, but when she found managers writing about how using ChatGPT was cheaper than paying a writer, the reason for her layoff seemed clear.

“Whenever people brought up ChatGPT, I felt insecure and anxious that it would replace me,” she said. “Now I actually had proof that it was true, that those anxieties were warranted and now I was actually out of a job because of AI.”

Artificial intelligence has rapidly increased in quality over the past year, giving rise to chatbots that can hold fluid conversations, write songs and produce computer code. In a rush to mainstream the technology, Silicon Valley companies are pushing these products to millions of users and – for now – often offering them free.

Some economists predict the technology could replace hundreds of millions of jobs, in a cataclysmic reorganization of the workforce mirroring the industrial revolution. Skeptics say that this fear of job losses is overblown and that AI chatbots will become aids, allowing people to work faster.

For some workers, the impact is real. Those that write marketing and social media content are finding themselves in the first wave of people being replaced with tools like chatbots seemingly able to produce plausible alternatives to their work.

Experts say that even advanced AI doesn’t match the writing skills of a human: It lacks personal voice and style, and it often churns out wrong, nonsensical or biased answers. But for many companies, the cost-cutting is worth a drop in quality.

“We’re really in a crisis point,” said Sarah T. Roberts, an associate professor at University of California in Los Angeles specializing in digital labor. “[AI] is coming for the jobs that were supposed to be automation-proof.”

AI and algorithms have been a part of the working world for decades. For years, consumer-product companies, grocery stores and warehouse logistics firms have used predictive algorithms and robots with AI-fueled vision systems to help make business decisions, automate some rote tasks and manage inventory. Industrial plants and factories have been dominated by robots for much of the 20th century, and countless office tasks have been replaced by software.

But the recent wave of generative artificial intelligence – which uses complex algorithms trained on billions of words and images from the open internet to produce text, images and audio – has the potential for a new stage of disruption. The technology’s ability to churn out human-sounding prose puts highly paid knowledge workers in the crosshairs for replacement, experts said.

“In every previous automation threat, the automation was about automating the hard, dirty, repetitive jobs,” said Ethan Mollick, an associate professor at the University of Pennsylvania’s Wharton School of Business. “This time, the automation threat is aimed squarely at the highest-earning, most creative jobs that . . . require the most educational background.” …

Fred:

I had hopes you would be applying your remarks to healthcare which would have fit into my commentary. Instead you are off on a different topic appearing to be unrelated.

I noticed this A.I. piece this morning and considered it to be an urgent topic.

Feel free to remove it if you choose.

(If there were a recent ‘Open Thread’ I would have posted it there.)

Had a conversation half a lifetime ago with a close friend who had become a pediatrician and was envious of the fee-for-service docs at his hospital, who were really raking in huge earnings from doing fee-for-service work.

The application of a value based price formula for drugs, as opposed to a development and production cost formula, was used to justify $100,000 a year prescriptions:

http://www.nytimes.com/2006/02/15/business/15drug.html

February 15, 2006

A Cancer Drug Shows Promise, at a Price That Many Can’t Pay

By ALEX BERENSON

Doctors are excited about the prospect of Avastin, a drug already widely used for colon cancer, as a crucial new treatment for breast and lung cancer, too. But doctors are cringing at the price the maker, Genentech, plans to charge for it: about $100,000 a year.

Eat more fiber – Oats and Oat Bran.

This was the beginning of $100,000 a year drug pricing, with the rationale being value of drug treatment rather than cost of development and production of a drug. This rationale for drug pricing was and is especially important.

ltr:

I am well aware of what is going on today. I have about 20 posts I have done in the past on costs, pharma, etc., in order to give you an answer. I do not write or talk in generalities. Pricing of cancer medicines and its impacts

This has been going on far longer than you know. And I have been reporting on it for 3-4 years now. Mostly ignored.

“Two different messages from government, greater efficiencies in healthcare through consolidations as ACOs versus monopolistic pricing control in healthcare by large hospital and pharmaceutical corporations an unintended result. There is large amounts of inefficiencies, waste, and rent-taking in healthcare as well as in Medicare which is touted as the go-to by politicians and advocates of it. Lets not make a similar mistake, the creation of any forthcoming healthcare system must first address the costs of healthcare and then the delivery of it not ignoring the quality of the product and its outcome after treatment. Again Maggie Mahar was big on promoting this result emanating from any new system.” Reviewing Healthcare Costs . . .

This is the Novartis Kymriah report, as stated again:

To be redundant, value based analysis methodology considers the Costs of R&D gained; the Costs of production and expenditures relating to product commercialization during the time period; the Value of medicine to patients, the health care system, and society; and Sufficient financial returns to incentivize future R&D programs gained (an overall cost reduction in treatment), in addition to other benefits, to determine the value of the drug to a person and society, from which to set a price.

This is the argument being made. Roche’s Herceptin targets HER2-positive breast cancer, an aggressive cancer which occurs in younger women, and Roche claims the benefits of treatment are particularly high, thereby deserving a higher price.

Can You Patent the Sun? Novartis applied the same “value-based” analysis to justify pricing for Kymriah, used to treat unresponsive B-cell acute lymphoblastic leukemia when there are no other options for patients or their families. It is a one-time treatment with follow-up treatments far less frequent than traditional therapies.

Unsupported Price Increase Report

It is all here, Reviewing Healthcare Costs . . ., and it is one of many I have sitting on my screen.

Now the ICER: Unsupported Price Increase Report. The ICER uses the above and also the Grade System.

House Democrats Drug Price Strategy

ltr:

Did you look and see what the ICER said on the pricing? They are the ones who review drug pricing.

http://www.nytimes.com/2006/02/15/business/15drug.html

February 15, 2006

Until now, drug makers have typically defended high prices by noting the cost of developing new medicines. But executives at Genentech and its majority owner, Roche, are now using a separate argument — citing the inherent value of life-sustaining therapies.

If society wants the benefits, they say, it must be ready to spend more for treatments like Avastin and another of the company’s cancer drugs, Herceptin, which sells for $40,000 a year….

This is the point:

“Until now, drug makers have typically defended high prices by noting the cost of developing new medicines. But executives at Genentech and its majority owner, Roche, are now using a separate argument — citing the inherent value of life-sustaining therapies.”

Value pricing in medicine is distinctly immoral; a way of harming those who are unfortunately the most ill. No other developed country allows such a pricing formula for drugs or medical services.

The claimed costs of drug development pale in comparison to the expenditures for marketing. Then too, their development often springs from primary research by the government, which they use for nothing.

http://www.nytimes.com/2004/09/06/books/06masl.html?ex=1252209600&en=1accf3fe4a08f287&ei=5090&partner=rssuserland

September 6, 2004

Indicting the Drug Industry’s Practices

By JANET MASLIN

THE TRUTH ABOUT THE DRUG COMPANIES

How They Deceive Us and What to Do About It By Marcia Angell, M.D.

http://www.nytimes.com/2005/05/06/opinion/a-serious-drug-problem.html

May 6, 2005

A Serious Drug Problem

By PAUL KRUGMAN

(Another interesting article on the impact of AI. Perhaps you can find a link to it.)

ChatGPT took their jobs. Now they walk dogs and fix air conditioners.

Washington Post via Boston Globe – June 3

In March, Goldman Sachs predicted that 18 percent of work worldwide could be automated by AI, with white-collar workers such as lawyers at more risk than those in trades such as construction or maintenance. “Occupations for which a significant share of workers’ time is spent outdoors or performing physical labor cannot be automated by AI,” the report said. …

ChatGPT took their jobs…