Modeling the Price Mechanism: Simulation and The Problem of Time

Today’s New York Times article on rapid online repricing by holiday retailers depicts a retail world starting to approach the “flash-trading” status of financial markets:

Amazon dropped its price on the game, Dance Central 3, to $24.99 on Thanksgiving Day, matching Best Buy’s “doorbuster” special, and went to $15 once Walmart stores offered the game at that lower price. Amazon then brought the price up, down, down again, up and up again — in all, seven price changes in seven days.

And:

The parrying could be seen with a Nintendo game, Mario Kart DS.

A week before Thanksgiving, the retailers’ prices varied, with Amazon selling it at $29.17, Walmart at $40.88, and Target at $33.99, according to Dynamite Data. Through Thanksgiving, as Target kept the price stable, Walmart changed prices six times, and Amazon five. On Thanksgiving itself, Walmart marked down the price to its advertised $29.96, which Amazon matched.

This made me think about a Greg Hannsgen post from last May on the Levy Economics Institute’s Multiplier Effect blog, a post I’ve been meaning to write about. It looks at how the pricing mechanism behaves depending on how fast prices adjust.

The especially interesting thing about this post: It uses a dynamic simulation model to display the effects of slower and faster price adjustments, and lets you run the model yourself, right on the page, by moving a slider to change the speed of price adjustment and watching the results. (You need to install a browser plug-in from Wolfram, which only worked for me in Firefox under Mac OS X 10.6.8; it failed in Chrome.)

The gist:

You wonder what will happen when markets finally start working. How about, for example, a market that changes prices and wages quickly in response to fluctuations in demand? …

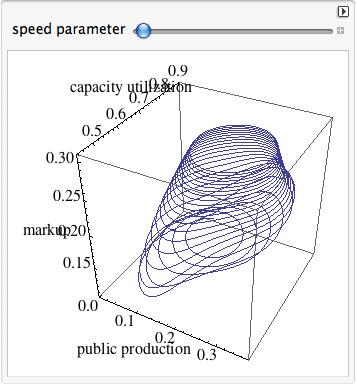

The pathway shown in the figure just below is followed by public production, capacity utilization, and the markup.

As you move the lever to the right, you are increasing a parameter that controls the speed at which the markup changes in response to high or low levels of customer demand.

What happens as the speed parameter is increased is that the economy’s pathway gradually changes until there is a relatively sudden vertical jump in the middle and much higher markup levels at the end—which means a bigger total rise in capital’s share.

Then:

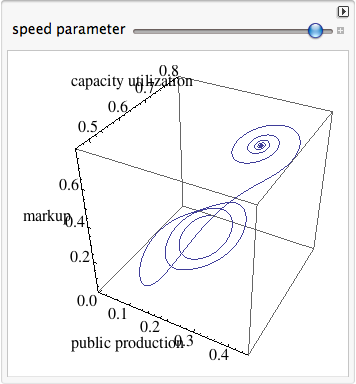

The next pathway is the one followed by a second group of three variables during the same simulation. This second group includes: money, the government deficit (surpluses are negative numbers in this figure), and the employment rate (total work hours divided by total hours supplied).

when the lever is all the way to the right, the pathway begins with an outward spiral, leading to a new inward spiral, and finally an employment “crash” of sorts that occurs as the center of the second spiral is reached. This occurs after the markup has reached very high levels, as seen in the earlier diagram.
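For readers who want to poke at the speed-of-adjustment idea without the Wolfram plug-in, here is a minimal sketch in Python. It is emphatically not Hannsgen’s model: it is a toy two-variable linear system, and the names (`simulate`, `late_amplitude`, `speed`, `damping`, `drag`) and all parameter values are assumptions made up for illustration. Utilization is pulled back toward its normal level but pushed down by a high markup, while the markup rises whenever utilization is above normal, at a rate set by `speed`.

```python
def simulate(speed, steps=100, damping=0.5, drag=1.0, shock=0.1):
    """Toy markup/utilization dynamics, measured as gaps from normal levels.

    Hypothetical illustration only; not the model from Hannsgen's post.
    u: utilization gap, m: markup gap.
    """
    u, m = shock, 0.0          # start with demand slightly above normal
    path = [(u, m)]
    for _ in range(steps):
        # Both updates use the previous period's values.
        u_next = (1.0 - damping) * u - drag * m
        m_next = m + speed * u
        u, m = u_next, m_next
        path.append((u, m))
    return path


def late_amplitude(path, window=20):
    """Largest absolute utilization gap over the last `window` periods."""
    return max(abs(u) for u, _ in path[-window:])


if __name__ == "__main__":
    for speed in (0.05, 0.2, 0.8):
        amplitude = late_amplitude(simulate(speed))
        print(f"speed={speed}: late amplitude = {amplitude:.3g}")
```

With the default `damping`, the swings in this toy system die out when `speed * drag` is below `damping` and grow without bound when it is above, so slow markup adjustment settles down while fast adjustment blows up. A linear toy like this cannot reproduce the jumps and crashes in Hannsgen’s figures; it only shows that the adjustment speed alone can flip the qualitative behavior.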

That’s a pretty amazing set of conclusions that I don’t think could ever have emerged from mainstream economic modeling techniques. You’ll notice that equilibrium, in particular, is a decidedly problematic concept here. It only seems to emerge when things have gone off the rails and hit the edge of the known world — not in those comfortable middle grounds so fondly envisioned by mainstream models.

I am making no claims of validity for this model’s assumptions, techniques, or conclusions (though I find the conclusions fascinating and plausible) — that’s beyond me. What I want to talk about is the validity of this class or type of dynamic simulation model compared to those commonly employed in “textbook” economic analysis.

In particular it makes me think about some recent posts and comments by Nick Rowe that are (bless him) teaching posts at least partially in response to my constantly demonstrated inability to properly grasp those standard approaches. (No: I’m not being ironic.)

Nick laid out the textbook understanding of the pricing mechanism based on marginal cost of production with wonderful clarity and broad insight here. I want to suggest that the explanation’s key feature is its use of “short-term” and “long-term.” It’s all about time.

But looking at the figures above — which model time fluidly and continuously (realistically?) as opposed to depicting it in two vaguely defined “chunks” — I want to ask whether Nick’s explanation, and the models employed in that explanation, are sufficient (or even proper) to grasp the processes playing out in the marketplace. Could they predict the effects that we see above? Is that type of depiction and prediction even within their inherent realm of capability?

Yes, Hannsgen’s model employs some textbook constructs, but it deploys them in a model that is structurally, qualitatively different from “comparative static” textbook models. Maybe the modeling technique is the message. Or at least, the proper modeling technique is a necessary condition for imparting an accurate and useful message.

Reading Nick’s posts and those of other smart econobloggers and -commenters, I am constantly astounded at his (their) apparent ability to intuitively comprehend and mentally manipulate multidimensional (and multiconceptual) interplays that leave me flummoxed. But I still wonder: is that ability sufficient for Nick to representatively model in his head, to intuitively understand, the complex interplay of factors at work? His frequent comments about holding one factor constant, and about the importance and difficulty of simultaneity in our thinking, suggest that the answer might be no.

When you add a minimum wage to a free-economy model, is he able to simulate, in his head, all the possibly resultant pathways through the multidimensional space of prices, labor inputs, capital inputs, and output quantities (not to mention redistribution feedback effects, or utility-related factors), all over time — a space where no factors are held constant?

Yes, I’m questioning Nick’s quantitative ability, given the models employed, to do this kind of simulation in his head. (Suggesting: it’s not just me! Though there is certainly a matter of degree.) But as a result, I’m also questioning the qualitative ability of those models, as employed, to enable such understanding in our limited minds (notably, mine).

As I said recently, science is about really understanding how things work — telling a convincing causative story — not (just) predicting what will (might) happen. (At the extreme, you could say that prediction is only useful for scientists as a test of understanding.) The textbook models seem to provide some predictive power, but you gotta wonder how much of that is false positives. And given that question, you have to ask how well they really “explain” how economies work — how much true understanding they provide.

In other words — back to my apparently congenital inability to really internalize and understand the textbook models — it’s not my fault! (Yes: now I am being ironic.)

All this raises one big question for me: why aren’t mainstream economists all over these kinds of dynamic simulation models? Why do we only see them at the fringes, in work by “heterodox” outsiders like Hannsgen and Keen, and in the ever-about-to-emerge work promised by the guys at the Santa Fe Institute? Why aren’t big and (within limits) out-of-the-box thinkers like Nick, Scott Sumner, Tyler Cowen, etc., people who show every indication of really wanting to understand, fascinated by the possibilities of this type of modeling? In the weather and climate business, textbook economic modeling techniques would be laughed out of the room. Aren’t economies complex, dynamic, emergent systems of the same type as weather and climate systems? Arguably they are even more complex, because economies include the game-theory grist of conscious intentions and expectations (about other people’s intentions and expectations).

I recently corresponded with an econ PhD candidate who really wanted to work with and build such models for his thesis. He reported that everything about the institutional and intellectual structure of academic economics militated against his doing so. “Just grab a data set, build a model and pull some regressions, call it good and head for the tenure track.”

You don’t have to read Kuhn or Marx to wonder whether this isn’t the result of 1. the irresistible intellectual gravitational pull of institutionally sanctioned models however obviously flawed (miasma, phlogiston, epicycles, equilibrium), and 2. the undeniable (inherent?) effectiveness of existing textbook economic models and modeling techniques in perpetuating and amplifying the established power structures and (increasingly) unequal distribution of income and wealth — wealth that actively seeks to perpetuate and expand itself via institutional structures like universities. (No names, just initials: Mercatus Center.)

I’m not imputing moral corruption here (except perhaps institutional). I both prefer and tend to believe that most of us try to do right, “as God gives us to see the right.” Rather, I tend toward the quite plausible institutional explanation laid out so nicely by Chomsky in Manufacturing Consent. In my words: institutions that are dependent on, are part and parcel of, those larger structures of power and wealth, only hire and promote people who already — perhaps by their very nature — see “right” right. (Or right “right.”)

And who knows? They might be right. Maybe my personal incentive is just to show (myself?) how smart I am relative to the mainstream institutions. But based on the Aha! moments of intuitive understanding that I experience when I see and explore models like Hannsgen’s, I tend to doubt whether that’s the only thing at play.

Cross-posted at Asymptosis.

“In the weather and climate business, textbook economic modeling techniques would be laughed out of the room.”

Yes. Actually, textbook economic modeling techniques were “laughed out of the room” at least by 1969 (Ayres and Kneese) — if not 1950 (Kapp), 1923 (J.M. Clark) or even 1898 (Marshall).

steve:

You have done something I have never done with LSS in manufacturing. I think much of it is being able to apply the theoretical to the practical. Our modeling never proves anything. It only points in a direction and holds true only for the constant time, place, and variables. Tomorrow it may be different. A nice read.

Anyone outside of economics who has worked with differential equations is used to the kind of behavior one finds in such models. Equilibrium, which seems to be at the heart of economics theory, is something that may not exist or may only be approached asymptotically depending on the structure of the attractor. That’s why the typical economics argument sounds strangely timeless to outsiders – timeless in the sense of avoiding the issue of change as a function of time.

The economists blithely talk about computing the production equilibrium while the first thing a mathematician asks is whether such an equilibrium exists. It is a real sign of a lack of mathematical sophistication in economics. Even though time has passed them by, economists are sticking with Sir Isaac Newton’s approach to the two body problem and ignoring everything that has happened since with Laplace, Somerville, or Lagrange, let alone the more recent contributions by Einstein, Riemann and Hawking.

It’s good to see an economist actually using some 18th century mathematics, rather than mere hand waving.

P.S. My first economics simulation, run on an IBM 1130 back in 1969, was based on the macroeconomics equations in a high school economics textbook. The simulation showed a messy oscillation in GDP and its related components. I was quite surprised. I ran it again with higher-precision arithmetic, which produced yet another, quite different, set of messy oscillations. It would take me a few years of college math to learn where my oscillations were coming from, and a few years more to learn something of the black art of numerical analysis, which explained why my answers depended on the level of precision. Let’s hope a few more economists get serious about the mathematical underpinnings of their field.
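The precision effect described in this comment is easy to reproduce with a stand-in. The sketch below does not use the commenter’s 1969 equations, which are not given; it assumes a different, well-known chaotic iteration (the logistic map) and runs it twice using Python’s `decimal` module, once rounding every operation to 8 significant digits and once to 28. The function name `logistic_path` and the values of `r` and `x0` are illustrative assumptions.

```python
from decimal import Decimal, localcontext


def logistic_path(digits, steps=100, r="3.9", x0="0.2"):
    """Iterate x -> r*x*(1-x), rounding each operation to `digits` significant digits."""
    with localcontext() as ctx:
        ctx.prec = digits              # working precision for this run only
        rate, x = Decimal(r), Decimal(x0)
        path = [x]
        for _ in range(steps):
            x = rate * x * (1 - x)
            path.append(x)
    return path


if __name__ == "__main__":
    low, high = logistic_path(8), logistic_path(28)
    for step in (3, 20, 40, 60, 80, 100):
        gap = abs(low[step] - high[step])
        print(f"step {step:3d}: gap between 8- and 28-digit runs = {float(gap):.2e}")
```

The two runs agree closely at first and then drift apart until they bear no resemblance to each other. Neither run is the “true” path; the higher-precision one simply stays close to it for longer, which is one reason the same equations can yield a different set of messy oscillations at each precision level.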

This article really is food for thought. It is time someone wrote an application for tracking prices on Amazon and other sites. You’d choose items and set your desired bid. The app would repeatedly get the price from the seller’s web site, both logged in with your account and anonymously with new cookies and possibly through a proxy to avoid tracking. When your price is met, you can pounce.

I often do this, because prices bounce around so much. It can save lots of money, even though the basic idea is stupid. I really should be able to put out an RFP with a bid price and let the suppliers fight for my business.
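The core of the price-watching app described in this comment is a small loop: look up each watched item’s current price and flag the ones at or below the desired bid. Here is a minimal sketch of that check. The names `Watch` and `find_deals` are hypothetical, and `fetch_price` is a placeholder for whatever actually retrieves a price; no real retailer API, login, cookie, or proxy handling is assumed or shown.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple


@dataclass
class Watch:
    item: str        # whatever identifies the product to the fetcher
    target: float    # the "bid": buy once the price is at or below this


def find_deals(watches: Iterable[Watch],
               fetch_price: Callable[[str], float]) -> List[Tuple[str, float]]:
    """Return (item, price) for every watched item at or below its target.

    `fetch_price` is a hypothetical stand-in for a live price lookup.
    """
    deals = []
    for watch in watches:
        price = fetch_price(watch.item)
        if price <= watch.target:
            deals.append((watch.item, price))
    return deals


if __name__ == "__main__":
    # Canned prices stand in for a live lookup (figures quoted in the article).
    canned = {"Dance Central 3": 15.00, "Mario Kart DS": 29.96}
    watches = [Watch("Dance Central 3", 20.00), Watch("Mario Kart DS", 25.00)]
    print(find_deals(watches, canned.__getitem__))
```

Passing the lookup in as a function keeps the comparison logic separate from the scraping, so the same check could be rerun on a schedule against any price source.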

Steve: “All this raises one big question for me: why aren’t mainstream economists all over these kinds of dynamic simulation models?”

Google various combinations of the words: “economics simulation models/methods DSGE adaptive learning”.

“dynamic” is redundant, since most economic modelling is dynamic, and we use simulation where it is too hard to solve a model analytically, which is more likely to be the case in dynamic models.

An old paper by Brad DeLong shows that price flexibility can be destabilising: http://works.bepress.com/brad_delong/11/

Kaleberg: Google “existence proofs general equilibrium”

Steve: here’s how it works.

Economists use words, where they can. If words don’t work they use diagrams. If it’s too complex for diagrams they use math. If it’s too complex for math they use computer simulations.

Bloggers try much harder to say it in words, not diagrams, math, or simulations.

And I am not a representative sample of mainstream economists. I’m much worse at math and computers.

The whole short run/long run thing is because it’s hard to use words to talk about 3 things moving at once. So that’s how we do it in first year.

“But looking at the figures above — which model time fluidly and continuously (realistically?) as opposed to depicting it in two vaguely defined “chunks” — I want to ask whether Nick’s explanation, and the models employed in that explanation, are sufficient (or even proper) to grasp the processes playing out in the marketplace.”

Aaaaaaaaaargh!!!!!!!!!

I just Googled “dynamic optimisation in continuous-time economic models” and got 2 million hits.

I was trying to explain things simply and intuitively, and not resorting to math like all the other economists do!
